Autopilot

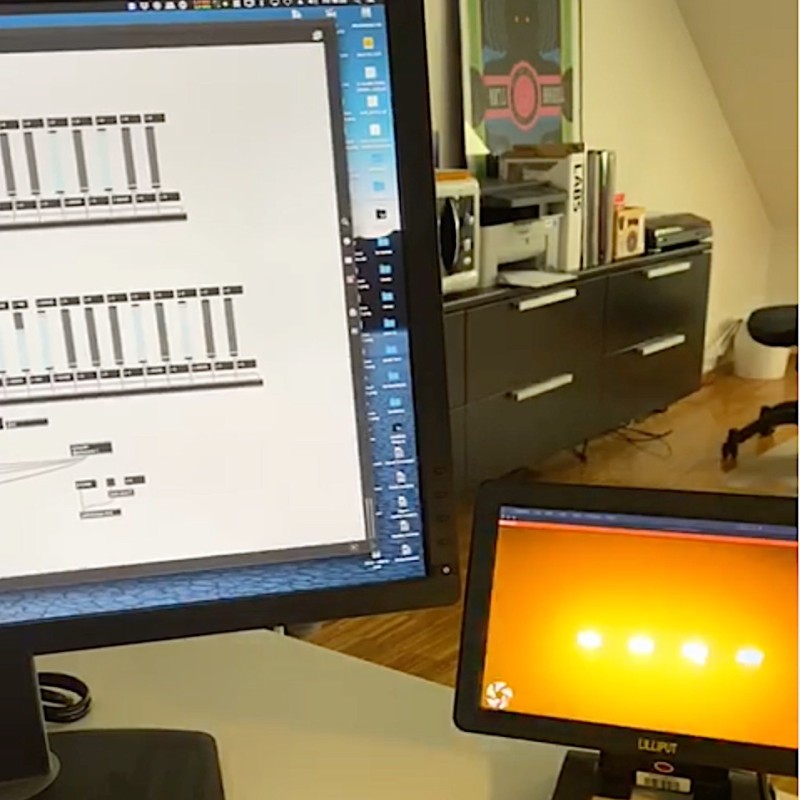

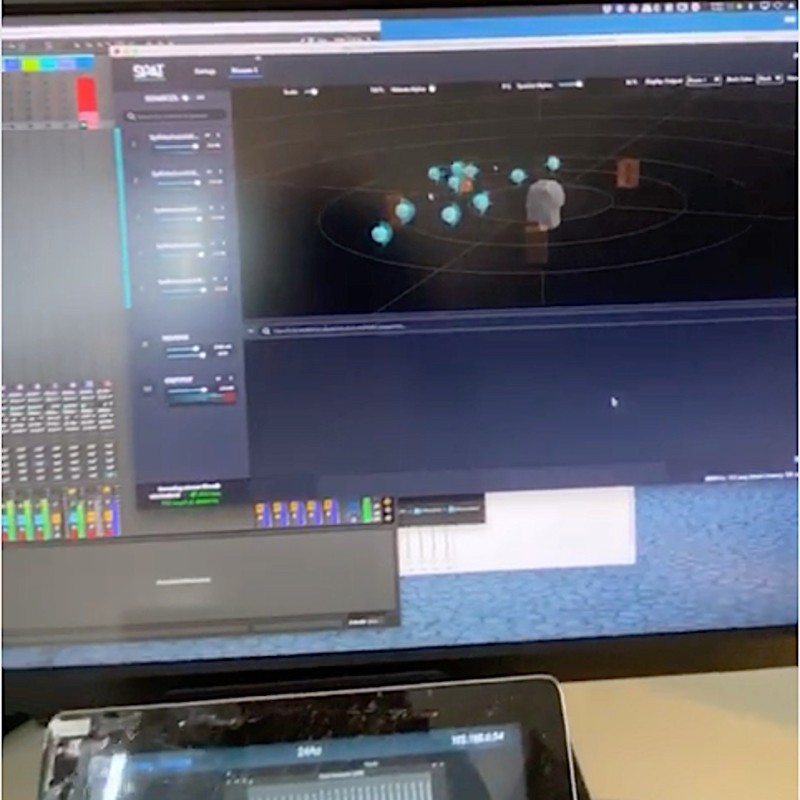

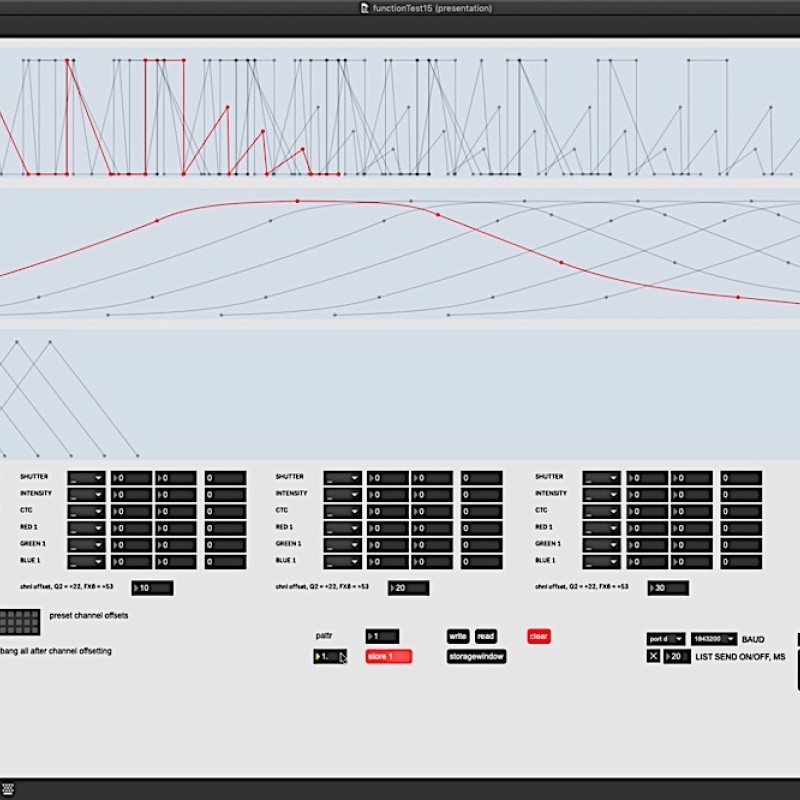

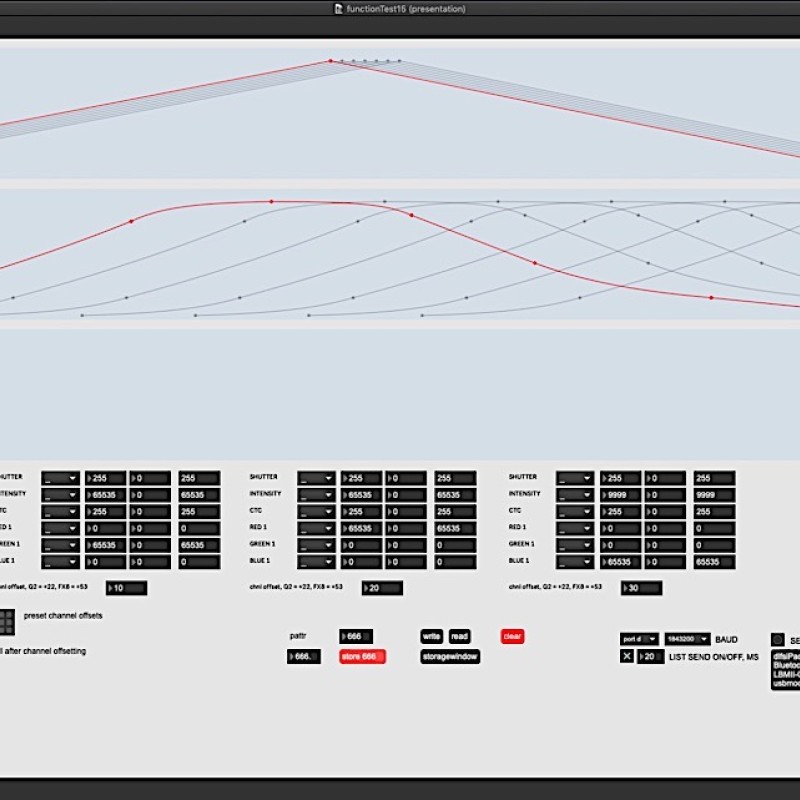

The aim of the Autopilot project was to research the development of an intuitive composition engine for spatial, kinetic and audiovisual installations: an engine that brings together diverse hardware and software components for controlling audio, video, light and machines (kinetics) in a single control and composition model, and that simplifies the control of large numbers of parameters. The engine should make it possible to compose with audio, video and objects intuitively, without losing control.

The research mainly focused on:

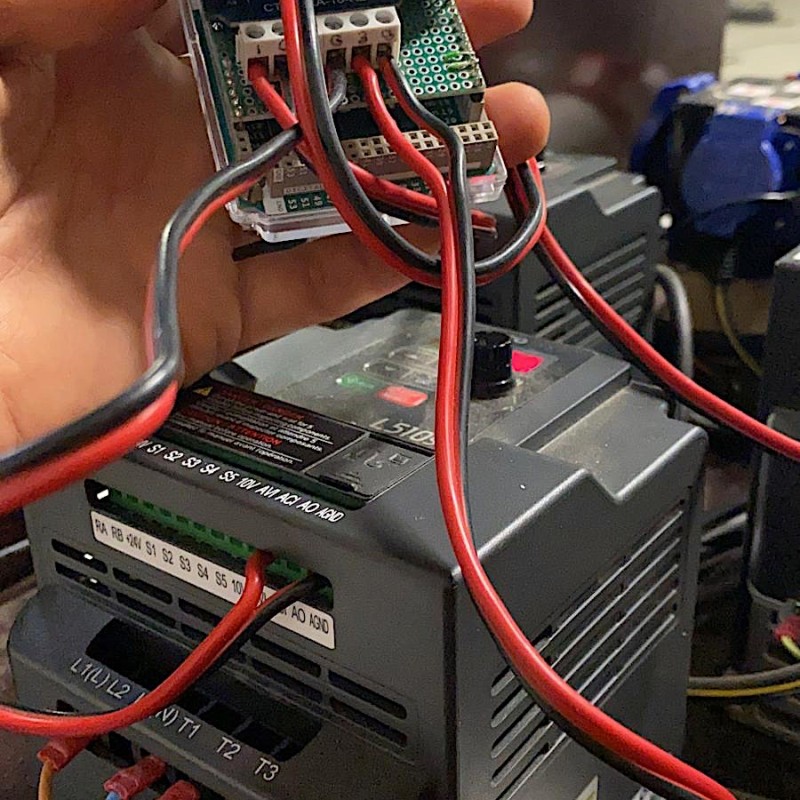

• System integration: how do you integrate tools for controlling motors (motion), light and sound in order to compose?

• From manual to 'autopilot': autonomy, automation, machine learning

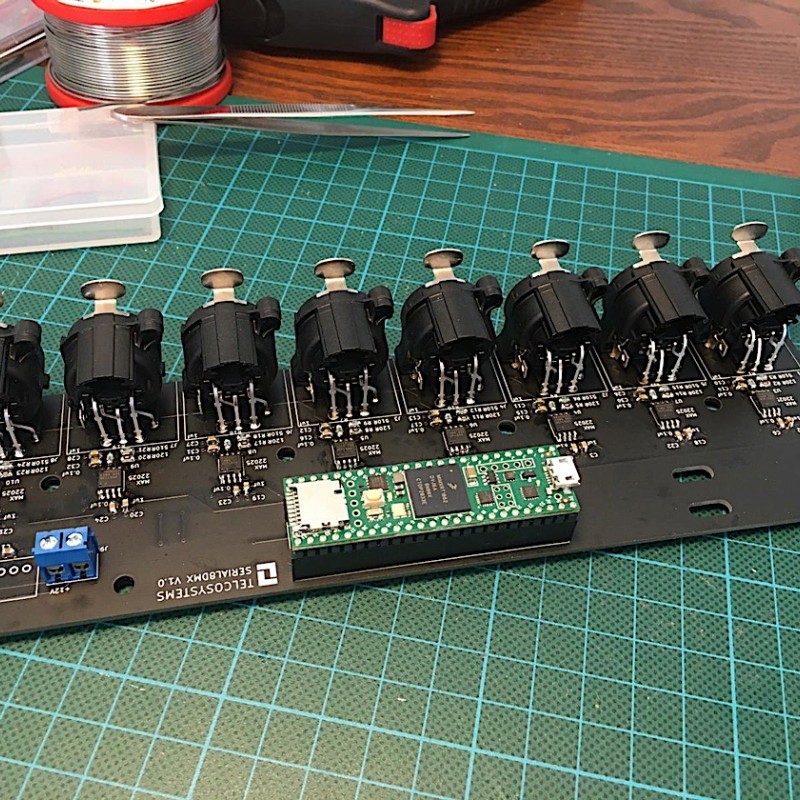

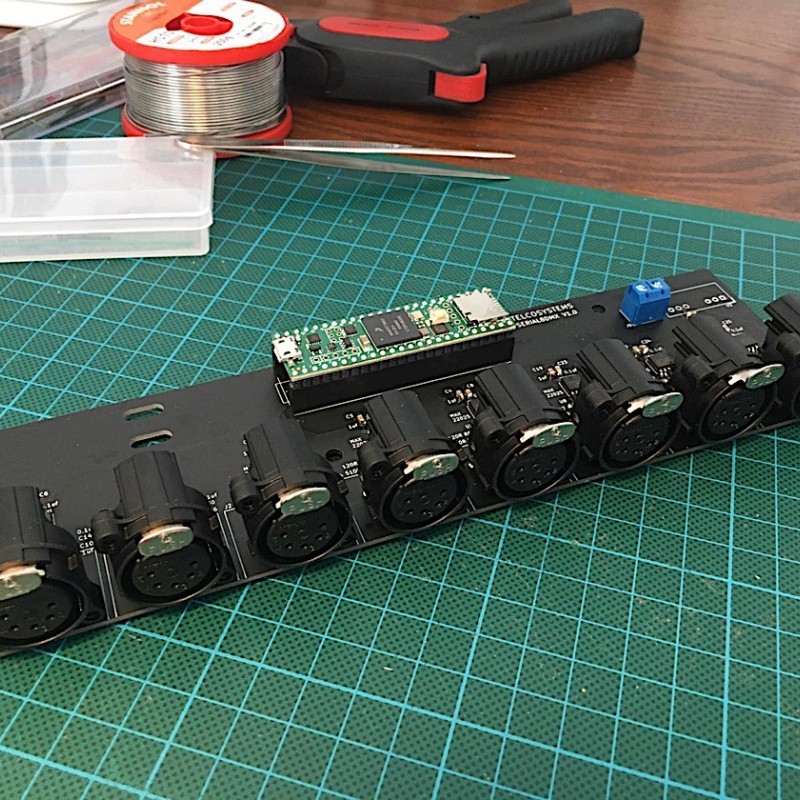

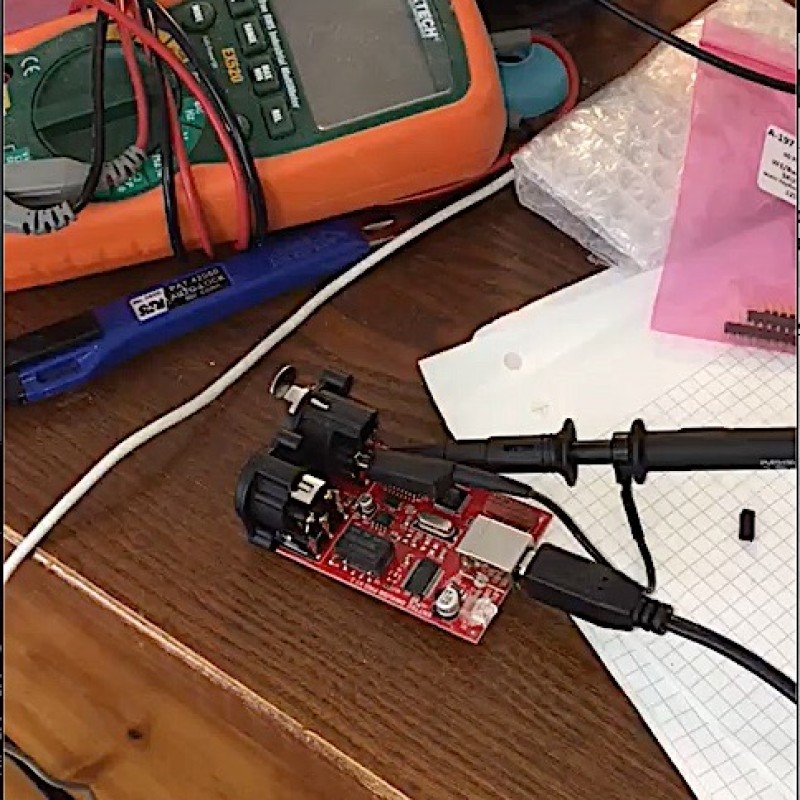

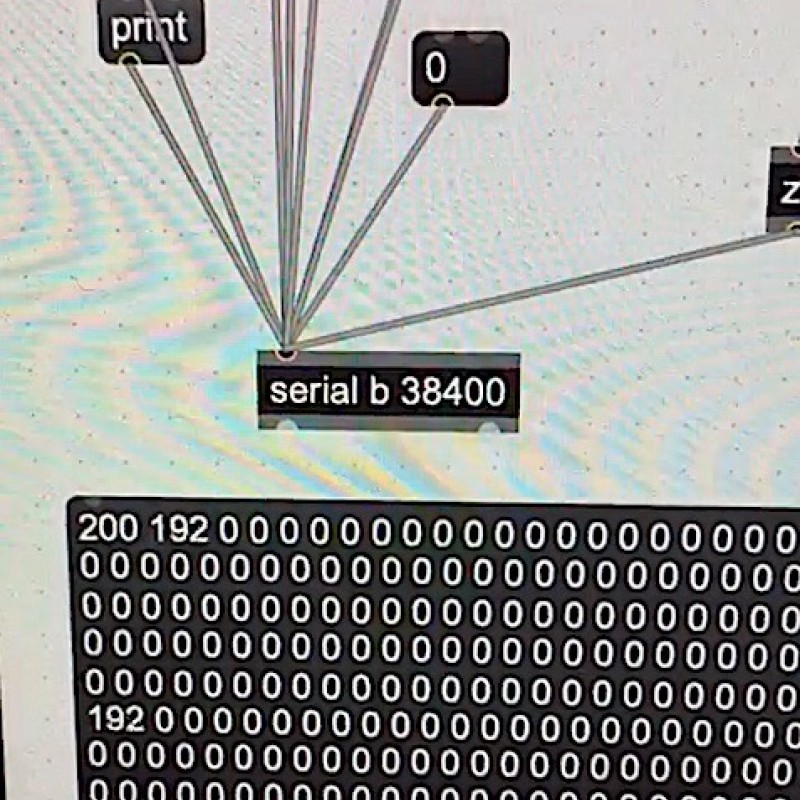

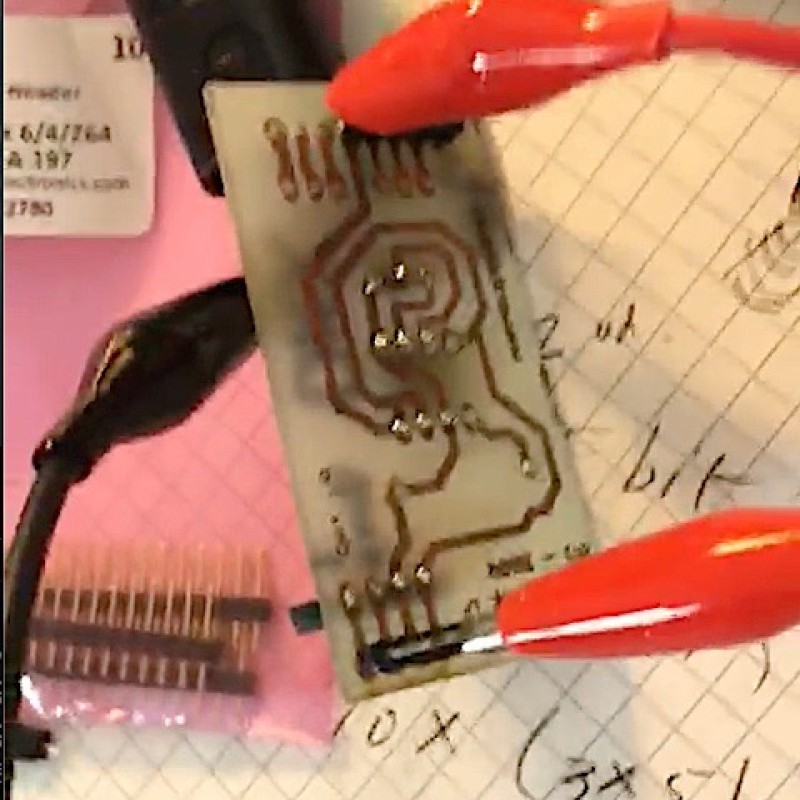

This research led to the development of a number of prototypes and ultimately resulted in a custom DMX device suitable for controlling audio, video, light and machines (kinetics) at high speed. With this device and a series of patches, we have developed a blueprint for a new composition engine.